-

Home

Home

-

Chapters

Chapters

-

Introduction

Recall that functions in Julia are first-class objects, meaning that they can be manipulated as any other variable. [note] Equivalently, it's said that functions are first-class citizens. This enables us to define vectors of functions, create functions that return other functions, and more.

In particular, it enables the definition of high-order functions: functions that take another function as an argument. We've already worked with several examples of them, where anonymous functions were used as an input (e.g.,

mapandfilter).Next, we consider the conditions under which high-order functions are type-stable.

Functions as Arguments: The Issue

For the explanations, let's refer to the high-order function as the parent function, and the child function as the one passed as an argument.

Each function in Julia defines its own unique concrete type. [note] Additionally, each function is a subtype of an abstract type called

FunctionThis feature can become problematic for specialization of high-order functions. Specifically, it may cause a combinatorial explosion of specialized methods, as each unique child function would require separate compilation.To mitigate this issue, Julia has opted for not always specializing the methods of high-order functions. In some circumstances, this may result in a degraded performance, similar to working in the global scope. Therefore, it's important to pinpoint the scenarios where specialization is inhibited and monitor its consequences. In situations where performance is significantly impaired, it's still possible to explicitly induce specialization. Next, we explore the methods for achieving this.

Warning! Exercise caution when applying this method, as overly aggressive specialization can degrade performance severely. This explains why Julia's default approach is deliberately conservative. In particular, avoid specialization when your script calls high-order functions repeatedly with many different functions. [note] For discussions about specializing high-order functions, see here and here.

Warning! Exercise caution when applying this method, as overly aggressive specialization can degrade performance severely. This explains why Julia's default approach is deliberately conservative. In particular, avoid specialization when your script calls high-order functions repeatedly with many different functions. [note] For discussions about specializing high-order functions, see here and here.No Specialization and Its Solutions

To illustrate the scenario, suppose we want to transform a vector

xusingmap, and then sum its transformed elements. Additionally, assume we want to keep the transforming function generic, for which the transforming function is defined as an argumentf. The implementation of this task is shown below, where we illustrate its usage through the functionabsas the transformed functionf.No Specialization

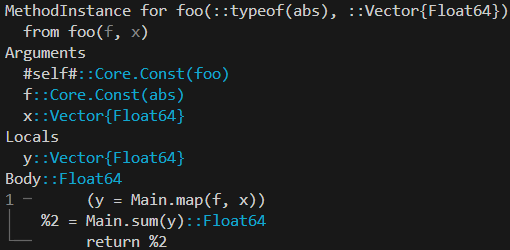

x = rand(100) function foo(f, x) y = map(f, x) sum(y) endOutput in REPLjulia>@code_warntype foo(abs,x)

The situation is especially tricky because

@code_warntypefails to detect any type-stability issues. The reason is that@code_warntypeevaluates type stability under the assumption of specialization, which doesn't hold in our example: Julia bypasses specialization in the particular case wherefis not explicitly called. Specifically,fin our example only entersfooas an argument ofmap.To corroborate the absence of specialization, let's compare the original function with a modified version that explicitly calls

f.We can observe a significant reduction in time by adding

f(1), along with a decrease in memory allocations. Excessive allocations are a common symptom of type instability, as we'll demonstrate when exploring the subject.Nonetheless, explicitly calling the function to circumvent the no-specialization issue isn't optimal, since it introduces an unnecessary computation. Fortunately, alternative solutions exist to address the problem. One approach is to type-annotate

f, which provides Julia with a hint to specialize. Another solution is to wrap the function in a tuple and then call it. This ensures the identification of the function's type, as the information is inherent to the tuple's type.For the sake of completeness, we next outline all the approaches, including the previous method with an explicit function call.